@diego-tinelli c'è una teoria che è uscita in questi giorni secondo cui Google riesce a indicizzare le pagine con Javascript prendendo i dati dal tuo computer (un po come fa con i Core Web Vitals) https://x.com/Suzzicks/status/1841137385096044706 ... ovviamente è solo una teoria a cui non tutti danno credito https://x.com/pedrodias/status/1841415082053382550

KejSirio

@KejSirio

UX Designer e SEO Specialist. Grafica, comunicazione visiva, ottimizzazioni SEO

Post creati da KejSirio

-

RE: Bot e Java scriptpostato in SEO

-

RE: I Segreti dell'Algoritmo: analisi di documentazione tecnica interna di Google Search trapelatapostato in SEO

Aggiungo la mia opinione, non tanto professionale, ma quanto quella personale, perché mi sono ritrovato moltissimo nel video del ragazzo che ha scoperto il leak.

Mi occupo di web dal 2007, i siti si facevano ancora in ActionScript e non esisteva (o quasi) la SEO. Non penso di essere un allocco… eppure mi sono sentito esattamente come il ragazzo del video. Ho partecipato anche io agli incontri con Mueller (office hours) e mi sono fermato con lui a discutere anche dopo che veniva fermata la registrazione. Ho sempre apprezzato la pazienza e la calma con cui Mueller cercava di dare suggerimenti, e il suo impegno sembrava onesto e sincero. Proprio per questo mi sono sentito tradito, imbrogliato e poi, subito dopo, mi sono sentito stupido per aver creduto così facilmente a quello che diceva lui e tutti gli altri di Google. Sicuramente è stata una mia mancanza, e certo non si può incolpare Mueller per aver detto cose di cui, probabilmente, non sapeva abbastanza. Potrebbero anche averlo fatto in maniera conscia, per nascondere informazioni e tediare la comunità SEO su argomenti non rilevanti, ma la mia impressione è che le frasi di Mueller non fossero dissimili da quanto potrebbe dire/generare una AI (concetti generici, frasi improbabili e tante allucinazioni). A dimostrazione della incapacità di Mueller c’è anche la storia recente dei suoi Office Hours: sono stati chiusi! Hanno provato a fare qualche podcast, in cui spesso e volentieri, Sullivan passava il tempo a blastare Mueller, dicendogli che non ne capiva niente, ma anche questi podcast sono stati interrotti/ridotti poco tempo dopo.

Sappiamo tutti che Google è una grande azienda che persegue i suoi (malefici?) obiettivi, per cui non c’è da stupirsi se cercano di fare i loro interessi… credo invece che siamo noi a dover avere la voglia di creare un Web che sia accessibile, paritario, che premi il merito e l’impegno di ognuno, senza dover subire i ricatti di un monopolista che modifica i suoi strumenti per mantenere una posizione dominate.

Concludo con un pensiero personale: quando qualcosa non va secondo le nostre aspettative è normale arrabbiarsi e sentirsi delusi, ma il sentimento successivo è quello di ripartire più forti di prima, anche se ci sentiamo come Davide contro Golia, anche se le nostre armi sono spuntate e la lotta sembra impari… a volte, dalle necessità dei singoli si possono ottenere grandi risultati che miglioreranno la vita di tutti! -

RE: Per una Intelligenza Artificiale Europeapostato in Intelligenza Artificiale

@giulio-marchesi grazie per la risposta e per il tempo che hai dedicato alla questione. Vale moltissimo, credimi!

Mi devo scusare per essere stato poco chiaro nella presentazione; l'obiettivo che abbiamo in mente non sono molto le "regole per l'I.A.", che sono un campo già molto battuto, sul quale anche la UE ha una legislazione in fase di approvazione, ma un intelligenza artificiale pubblica europea, al servizio dei cittadini e della democrazia.

-

Per una Intelligenza Artificiale Europeapostato in Intelligenza Artificiale

Con un gruppo di attivisti/e stiamo pensando di proporre una ECI (European Citizen Initiative) sul tema dell'intelligenza artificiale. Mi piacerebbe molto sapere quali sono le vostre idee e le vostre preoccupazioni su questo argomento.

Tenete presente che è una bozza ancora molto embrionale e proprio per questo vorrei l'opinione di un gruppo di esperti come voi. Senza entrare in tecnicismi, quali sono i principi/etiche che vorreste fossero assicurati in una AI Europea? Quali sono i pericoli che bisogna assolutamente evitare?Title: For a European Artificial Intelligence: open and at the service of democracy

We ask the Commission to develop an open artificial intelligence system, at the service of the right to knowledge and democracy.

We call for the creation of a "Large Language Model" system that is open source, trained on the basis of the documents of the public administrations of the Member States and the EU, such as legislation, case law, cultural and scientific heritage...An application will consist of a virtual assistant to help citizens in their dealings with the EU and states. The service will improve knowledge of the rules and facilitate democratic participation, allowing everyone to interact in simple and not necessarily technical language. The model will also be able to be integrated upstream in the regulatory process, assisting the legislator in the writing of rules.

REASONS

The recent advances shown by generative AI, especially Large Language Models (LLM), suggest a huge impact on productivity. In "The economic potential of generative AI" McKinsey estimates the value generation potential for the industry at between 2.6 trillion and 4.4 trillion.

Among the various risks accompanying the diffusion of these technologies are that of increased inequality due to the possibility or otherwise of access and that implicit in the oligopoly of model development and ownership. As LLMs spread, integrating their functions into a multitude of products, often imperceptibly to the end user, the possibility of having transparent control over the models and training sets used for training will become increasingly important in order to better understand how the system works.

The Commission's contribution is necessary because LLMs have been shown to improve performance as they increase in size, and the cost of training increases accordingly; for example, OpenAI CEO Sam Altman told the press that GPT-4 training cost more than $100 million. It seems clear, therefore, that in order to be internationally competitive, investments of at least this order of magnitude are needed, which are more easily achieved on a European scale.The governance of the management and development of the model must reduce the risk of interference by commercial interests or state control and can be achieved through a non-profit organisation or foundation.

-

RE: Alta volatilità Serp Google - Luglio 2023postato in SEO

Dalla newsletter Fattoretto di ieri: Analizzando alcune keyword transazionali, notiamo che dal quarto/sesto risultato (il dato può variare a seconda della SERP esaminata) inizia il carosello di Google Shopping. Di conseguenza, i risultati organici sono spostati più in basso.

-

Alta volatilità Serp Google - Luglio 2023postato in SEO

Voi state notando fluttuazioni nelle Serp Google in questi giorni?

https://www.seroundtable.com/google-search-volatility-break-rank-tracking-tools-35723.html

Barry dice che tutti i sensori sono impazziti, ma il suo personale sensore (le chat Seo) non stanno registrando questi effetti cosi dirompenti, almeno non ancora.

Ipotesi 1: ci sono in corso numerosi esperimenti nelle Serp; ipotesi 2: c'è aria di core update; ipotesi 3: sto sbagliando io qualcosa... anche se non è così, io me lo chiedo sempre... non si sa mai XD -

RE: Google Analytics è illegale in EU?postato in Google Analytics e Web Analytics

@kal e quindi? Da domani Analytics legale anche in EU?

-

RE: GA4 arriverà a monitorare le pagine AMP?postato in Google Analytics e Web Analytics

A soli due giorni dallo switch-off di Universal... https://www.seroundtable.com/google-analytics-4-now-supports-amp-pages-35625.html ... GA4 è arrivato a monitorare le pagine AMP.

Qui la documentazione ufficiale: https://support.google.com/analytics/answer/13707678

A quanto pare hanno fatto una copia precisa (compresa di bug) del lavoro fatto da David Vallejo, senza dare un minimo di credito... peccato. -

RE: Sorgente/mezzo campagne Ads su GA4postato in Google Analytics e Web Analytics

@kal grazie della risposta. Si GA4 e GAds sono collegati e adesso capisco perché li aggrega in questo modo (tvb perché riesci a spiegare bene con così poche parole).

Sono d'accordo sulla "pessima idea" di usare parametri custom... la spiegazione è che il reparto marketing è abituato a distingue i funnel di acquisizione basandosi sulla sorgente/mezzo... ma se su GA4 vengono "sovrascritti" non ha più senso usarli. Sto cercando di farglieli cambiare, ma come sai... il cambiamento è difficile da accettare.

Ultima cosa, anche la "campagna sessione", anziché usare il parametro utm_campaign, viene sovrascritta con il Nome della campagna su Ads... immagino per lo stesso motivo (collegamento GA4 e GAds).

Mi rimane solo il dubbio se l'acquisizione basata sull'ultimo click possa risolvere queste incongruenze. -

Sorgente/mezzo campagne Ads su GA4postato in Google Analytics e Web Analytics

Ciao a tutti, ho già letto https://connect.gt/topic/249110/ga4-e-sorgente-mezzo e https://connect.gt/topic/250290/traffico-derivante-principalmente-da-sorgente-direct-quali-spiegazioni ma non riesco ancora a trovare la soluzione a questo problema... magari qualcuno mi può aiutare.

Praticamente ho delle campagne Ads in cui metto come utm_source="googleads" e utm_medium="paid"... ma quando arrivano in GA4 hanno sorgente="google" e mezzo="cpc". Le stesse sorgenti di acquisizione sono invece tracciate correttamente sul vecchio UA3.

Non riesco a capire se è legato al nome che utilizzo nella sorgente (googleads anziché google) oppure se ci sono altri motivi. Ho impostato il modello di attribuzione basato sui dati (cosigliato) ma ho il dubbio se cambiarlo con Ultimo Click (preferito da Ads). Avete qualche consiglio a riguardo?

Grazie a chi mi vorrà rispondere

-

Ding Liren: il nuovo campione del mondo di Scacchipostato in GT Fetish Cafè

Per chi non lo conoscesse:

Ding Liren è il campione mondiale di scacchi FIDE in carica dopo aver sconfitto il GM Ian Nepomniachtchi nel Campionato del Mondo del 2023. Come la maggior parte dei campioni del mondo, una combinazione di dominio e situazioni difficili hanno definito la carriera di Ding fino alla conquista del titolo.

Ding ha vinto il suo primo Campionato Cinese di Scacchi all'età di 16 anni, diventando il più giovane di sempre a farlo. Nel 2017 e nel 2019, nella Coppa del Mondo di Scacchi, è diventato il primo giocatore nella storia a raggiungere per due volte consecutive la finale. Ai suoi tre titoli cinesi si uniscono due medaglie d'oro a squadre e una medaglia d'oro individuale alle Olimpiadi degli Scacchi (oltre a una medaglia d'oro a squadre ai Campionati del Mondo a Squadre).

Dal agosto 2017 al novembre 2018, Ding ha mantenuto una striscia di imbattibilità di 100 partite in competizioni di scacchi di alto livello, la più lunga della storia fino a quando il GM Magnus Carlsen non l'ha interrotta nell'ottobre 2019. Nel 2018, Ding è entrato nei primi cinque giocatori di scacchi al mondo (a maggio) e ha superato il punteggio Elo di 2800 (a settembre), e rimane in quelle categorie ancora oggi.

I giornali italiani invece fanno click baiting mettendo in evidenza la sua passione per la Juventus

https://www.gazzetta.it/Calcio/Serie-A/Juventus/02-05-2023/campione-mondo-scacchi-ding-liren-tifo-juve-verro-presto-allianz-4601390917087.shtml

https://www.open.online/2023/04/30/campione-mondo-scacchi-cinese-fan-juventus/

https://www.fanpage.it/sport/calcio/liren-diventa-campione-del-mondo-di-scacchi-e-ha-un-solo-desiderio-voglio-andare-a-vedere-la-juve/ -

RE: Traffico derivante principalmente da sorgente "direct": quali spiegazioni?postato in Google Analytics e Web Analytics

Se ho capito bene, l’utilizzo di termini “custom” negli UTM potrebbe alterare la sorgente di traffico? Io sto notando una notevole discrepanza tra alcune Sorgenti UA3 (googleads e google_shopping) che in GA4 vengono segnate con sorgente Google e mezzo Cpc... potrebbe essere la stessa problematica riscontrata da @lucaf7? O sto sbagliando io qualcos'altro?

-

RE: Disavow Google Togliere filepostato in SEO

Il disavow è un suggerimento di "disconoscimento" ma non è detto che venga rispettato. Google potrebbe prenderlo in considerazione oppure no (a mia esperienza no). Hai mai comprato/scambiato backlink? In questo caso ti tocca fare il doppio lavoro e disconoscere tutti i domini malevoli attraverso il disavow. Altrimenti non fare niente, ricarica il file di disavow vuoto e dimenticati di quello strumento.

P.s. Se hai usato strumenti tipo Semrush o aHrefs puoi tranquillamente buttare tutto... l'unico minimamente affidabile e Search Console. Se voglio sapere cosa sà Google dei backlink al mio sito, vado direttamente alla fonte, o no?

Ultimo Hint: io uso anche gli Alert che mi avvisano appena Google trova una citazione al mio sito. Me li metto in un excel e li ricontrollo una volta al mese... se dopo 6 mesi sono ancora li, posso valutare se metterli in disavow.

Spero di esserti stato utile -

RE: La nuova influencer di Italia.it: la Venere Turista. Cosa ne pensate?postato in Web Marketing e Content

Non è male, l'idea è buona e, come sottolinea @esteban, è "lungimirante" (si spera).

Quello che non mi piace è il mix linguistico nel nome della campagna, su 4 parole 2 sono in italiano e 2 in inglese. Non sono per l'italiano a tutti i costi, ma questo costringe il cervello, anche quello di un bilingue, a cambiare registro di lettura... insomma non una scelta felice. Il sito lo trovo molto simile ad un noto portale di affitta camere...niente di entusiasmante. Belle invece le impostazioni sull'accessibilità che sono veramente TOP. -

RE: Ma ci credete davvero a questa roba? :)postato in Intelligenza Artificiale

Mi collego al titolo di questa discussione per segnalarvi questa previsione trovata qui: https://www.mattprd.com/p/the-complete-beginners-guide-to-autonomous-agents

"Here are my predictions for the future of autonomous agents:

2023 multiple commercialized autonomous agents for gaming, personal use, marketing, and sales.

2024 commercialized autonomous agents for every category but not mainstream adoption.

2025 mainstream adoption of autonomous agents in every category for everything imaginable.

2026 most people in first-world countries are going about every day life with the support of an army of autonomous agents."

Qualcuno evidentemente ci crede davvero! Qui una demo "relativamente" funzionante https://agentgpt.reworkd.ai/ -

RE: Voi come vi aggiornate? Io così...postato in Internet News

Io seguo Schwartz, anche se è un pò il Dagospia della SEO, trovo che il suo daily recap sia molto utile per tenere sott'occhio le novità di settore. Mueller, sia su Twitter che su Mastodon, anche se dà sempre risposte molto generiche (es: il sito funziona bene se è fatto bene...). Vari canali Reddit e alcuni forum americani, più ovviamente la newsletter di @giorgiotave che è sempre precisa e accattivante. Non uso per niente Substack, ma credo sia una mia mancanza/riluttanza nel non voler usare questi aggregatori.

-

Tasso di coinvolgimentopostato in Google Analytics e Web Analytics

Ciao a tutti, tra le impostazioni di GA4 si trova questo configuratore per gestire un "timer" delle sessioni con coinvolgimento. Se non ricordo male, nelle vecchie proprietà Analytics, era comune evitare di influenzare il tasso di coinvolgimento con questi timer, ma con il nuovo GA4 ce lo troviamo tra le impostazioni... già bello e pronto... e devo dire che funziona abbastanza bene.

Avendo numerose visite su pagine AMP ho deciso di ricreare un timer anche li, ma i risultati sono notevolmente diversi. Sulle pagine NON Amp il coinvolgimento è intorno al 40%, su quelle AMP con questa modifica sul timer, sono arrivato al 95%.

Ho seguito la guida dell'ottimo David Vallejo -> https://www.thyngster.com/how-to-track-amp-pages-with-google-analytics-4 e sono in attesa dei nuovi sviluppi annunciati qui -> https://www.seroundtable.com/google-amp-support-google-analytics-4-35114.htmlAvete qualche altro suggerimento? Cosa ne pensate dell'utilizzo del timer per valutare le sessioni con coinvolgimento?

-

RE: Test SEO: rimozione tramite X-Robots-Tag di una pagina bloccata da robots.txtpostato in SEO

@kal grazie per le rassicurazioni, sei sempre gentile e preciso.

La mia preoccupazione è che veda un aumento di contenuti "poco utili" e mi possa penalizzare in qualche altro modo. Stiamo parlando di 900mila pagine "bloccate ma indicizzate" su un totale di 1,6Mln -

RE: Test SEO: rimozione tramite X-Robots-Tag di una pagina bloccata da robots.txtpostato in SEO

@kal se per link esterni intendi altri domini che puntano a quelle pagine, no (almeno che io sappia). Dall'applicazione invece sono raggiungibili da tutti gli utenti che usano i filtri a faccette. Forse è proprio questo il motivo per cui il Crawler ci è entrato fregandosene delle regole robots. Sto valutando anche di usare rel="nofollow" sui link, proprio per rafforzare il blocco.

Per fortuna non ci sono impression su GSC e nelle Serp ti confermo che questi risultati vengono spesso nascosti in "sono state omesse alcune voci molto simili", oltre a essere senza Title e Description

-

RE: Test SEO: rimozione tramite X-Robots-Tag di una pagina bloccata da robots.txtpostato in SEO

Bello, interessantissima questa discussione. Bravo @kal per l'ottima esposizione e grazie @sermatica per il video.

Prendo spunto dalle ultime parole di Francesco Margherita per raccontarvi la mia esperienza personale.

Probabilmente sto sbagliando io qualcosa perché, in coincidenza di un aumento di Scansioni, sono aumentate notevolmente anche le pagine "indicizzate ma bloccate da robots". Nel mio caso si tratta di incroci multipli generati da filtri a faccette che storicamente ho sempre bloccato via robots... invece google ci è entrato alla grande, ignorando del tutto le regole di disallow (come nell'esempio di yelp) e adesso sto valutando di metterle in noIndex.

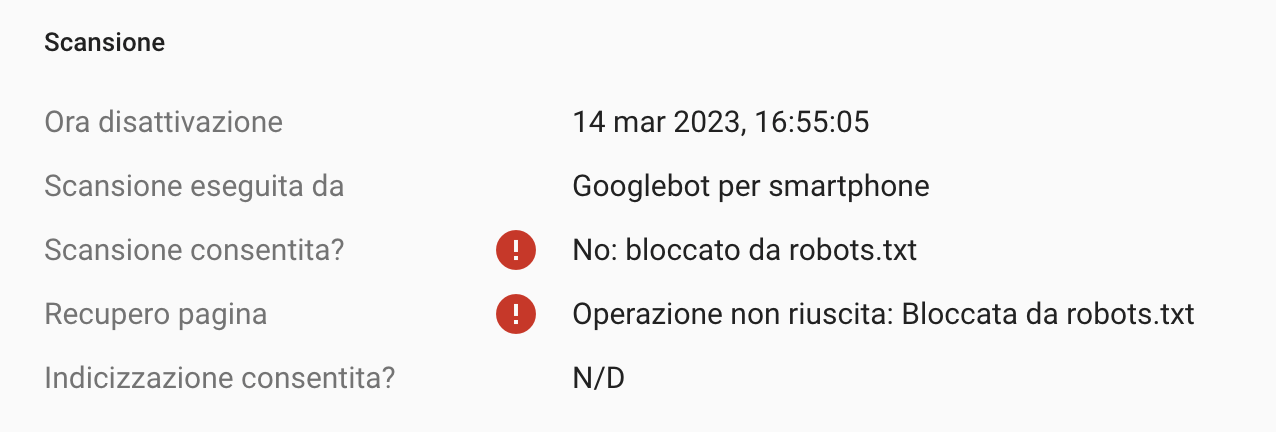

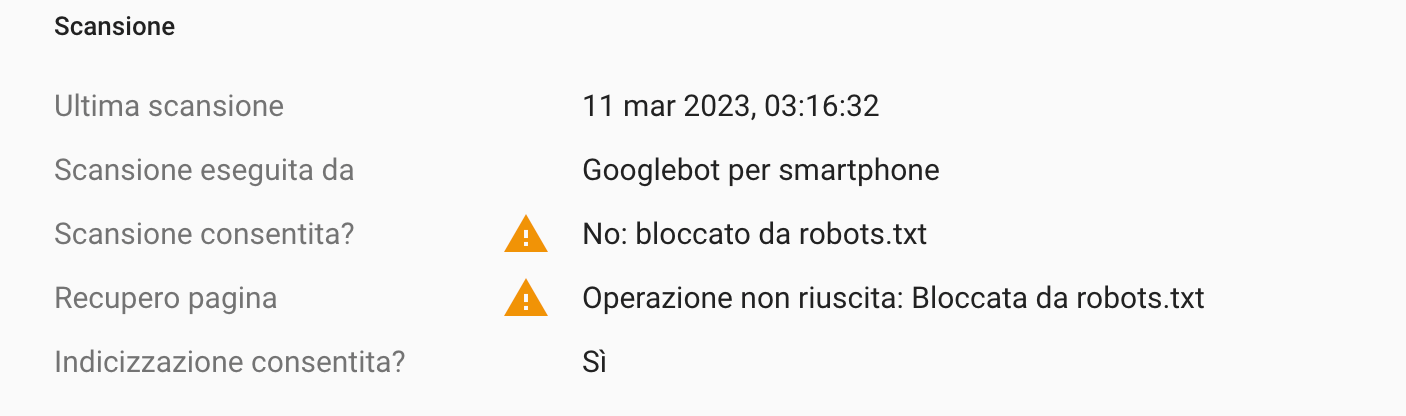

Confermo invece che nelle Serp questi risultati sono SENZA Title e DescriptionQuesta è una delle pagine che risulta indicizzata ma bloccata da robots. Come è possibile che "Scansione consentita = no" e "recupero pagina = bloccata da robots" e poi invece "indicizzazione consentita: SI"?!?!?

mentre questo è il test in tempo reale